Guide to Understanding Video Sources, Part 2 – Capturing Videotapes

Before sitting down to capture or encode video, it is important to understand the source. What exactly is “source”? Source is the TV broadcasts, VHS tapes, video cameras, Internet downloads, etc. Every source has its own unique properties that must be understood in order to edit and convert it to a high-quality DVD. Video is all about decisions. It is more art than science. Understanding your source will allow you to select proper encode/capture resolutions, the proper interlace settings, and proper color management techniques. Several concepts are explained in this guide:

- Theory vs. application,

- Analog sources,

- Digital sources,

- Interlace vs. Progressive vs. De-Interlace,

- Black and white versus color,

- Aspect ratio,

- Colorspace compression,

- Overscan, masking, cropping,

- and some playback considerations

NOTE: Although this site aims to avoid technical jargon, this page will contain several new terms that will re-appear all the time throughout the DVD creation process. All attempts are made to explain video theory and technological terms while using normal language, when available. Both info tables are thick with jargon.

Theory vs. Application

Understand that there is a difference between THEORY and APPLICATION. It is a contest between what is supposed to happen versus what really does happen. There are math formulas out there that can precisely dictate what a digital resolution SHOULD be for an analog source. However, in PRACTICE, these numbers are never reached. These numbers will be mentioned below, but should not be used for your calculations when deciding on a resolution for your new DVD. Why is there a gap? It’s quite simple, really. Analog media, much like the analog information stored on them, are imperfect. These math equations are based on varying ideas, including TVL (TV lines of resolution) and the 4:3 aspect ratio. There are also variations that include concepts like the Kell factor and the Nyquist limit. All in all, this is best left to scientists that like to argue. It is of no real concern to hobbyists and non-pro videographers. Digital is an exercise in precision, while analog was an exercise in controlled chaos.
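To show what one of these "theory" formulas looks like, here is a minimal Python sketch of a common rule of thumb that turns analog bandwidth into lines of resolution. It is not taken from this guide: the active line time of about 52.66 microseconds for NTSC and the idea that one signal cycle resolves two lines are assumptions, and the function names are made up for illustration.

```python
# Rule-of-thumb conversion from analog luma bandwidth to lines of resolution.
# ASSUMPTIONS (not from this guide): an NTSC active line lasts about 52.66
# microseconds, and one full signal cycle resolves two "lines" (one dark,
# one light).

ACTIVE_LINE_US = 52.66   # assumed NTSC active line time, in microseconds
ASPECT = 4 / 3           # TVL is counted across a width equal to picture height


def full_width_samples(bandwidth_mhz: float) -> float:
    """Theoretical light/dark transitions across the full picture width."""
    # MHz multiplied by microseconds gives cycles per active line.
    return 2 * bandwidth_mhz * ACTIVE_LINE_US


def tv_lines(bandwidth_mhz: float) -> float:
    """Theoretical TV lines (TVL) for a 4:3 picture."""
    return full_width_samples(bandwidth_mhz) / ASPECT


if __name__ == "__main__":
    for name, mhz in [("VHS", 3.0), ("Broadcast", 4.2), ("S-VHS", 5.0)]:
        print(f"{name}: about {tv_lines(mhz):.0f} TVL, "
              f"about {full_width_samples(mhz):.0f} samples across the full width")
```

Under these assumptions, 3.0 MHz works out to roughly 237 lines, close to the 240 lines usually quoted for VHS. As the paragraph above warns, treat this as theory only; real tapes and real decks deliver less.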

Analog Source Resolutions

There are only two kinds of source: analog and digital. Analog source comes from tapes or analog broadcast signals, and digital source comes from computers, digital broadcast signals or digital cameras. Analog source cannot be measured in the same terminology as digital source. While resolutions, bit-rates, audio levels, etc., are rigid measurements in the digital world, this does not hold true in the analog world. Analog sources are measured differently, typically using various power and output measurements. The following table uses approximate digital equivalents for the various analog sources. The table presents both IN PRACTICE and IN THEORY numbers for the digital equivalents, with theory being the higher of the two. Information presented on this chart currently reflects only the USA NTSC video standard. PAL and SECAM standards (outside the USA) may be added in a future update. In most cases, you can simply substitute x480 with x576 and get the PAL variants.

| Format: | Analog Measurement: | Digital Equivalent: | Audio and Other Info: | Suggested Capture Size: |

|---|---|---|---|---|

| Broadcast antenna television and analog cable | Up to 4.2 MHz, drop-frame 60hz power cycle, 300-340 lines of resolution, interlaced | 350x480 to 400x480 interlaced | 29.97fps NTSC, audio approximately 44.1kHz, 4:2:2 sampling | 352x480 |

| Satellite (DSS,DVB) and digital cable | Interlaced digital signal, encrypted | 352x480, 412x480, 480x480, 544x480, 640x480, 704x480, 720x480, and many others. Depends on provider and channel | 29.97fps NTSC, audio can be MPEG audio or Dolby AC3 audio, often at 44.1kHz, 4:2:0 and 4:2:2 sampling | 352x480 or 704x480 or 720x480 |

| VHS | Up to 3.0 MHz (very weak), 240 lines of resolution, interlaced | 250x480 to 300x480 interlaced | 29.97fps NTSC, HiFi audio about 44.1kHz, 4:2:2 sampling | 352x480 |

| Super VHS (S-VHS) | Up to 5.0 MHz (very strong), 400-425 lines of resolution, interlaced | 400x480 to 500x480 interlaced | 29.97fps NTSC, HiFi audio about 44.1kHz, requires S-VHS player or SQPB, 4:2:2 sampling | 352x480 or 704x480 or 720x480 |

| Super VHS ET (S-VHS-ET) | Between VHS and S-VHS measurements, interlaced | Between 250x480 and 500x480, normally 350x480 | Same as S-VHS, use high grade VHS tapes only, 4:2:2 sampling | 352x480 |

| Betamax | 250 lines of resolution, interlaced | Similar to VHS | This is not the same as Betacam SP, 4:2:2 sampling | 352x480 |

| Betacam SP | Up to 7.5 MHz, 360 lines of resolution | 400x480 to 500x480, interlaced | High bandwidth used for color retention and saturation | 704x480 or 720x480 |

| 8mm | Similar to VHS, interlaced | 270x480 to 300x480 | 29.97fps NTSC, 4:2:2 sampling | 352x480 |

| Hi8 | Similar to S-VHS, interlaced | Similar to S-VHS | 29.97fps NTSC, 4:2:2 sampling | 704x480 or 720x480 |

| Digital 8 | Interlaced digital DV signal | DV 720x480 | This is digital data on an analog tape, 4:1:1 or 4:2:0 DV sampling | N/A DV 720x480 |

| Laserdisc | Up to 5.0 MHz, 400-425 lines of resolution, interlaced | 528x480 and 544x480 | Analog video on a digital media. Audio can be stored in an analog track or in a digital track (Dolby AC3, DTS). 29.97fps NTSC, 4:2:2 sampling | 704x480 or 720x480 |

Analog Source Notes:

- Digital cable: Not all digital cable is a digital signal. Sometimes it is a digitally compressed analog signal being decompressed by the digital cable box. Check with your cable provider to see what you have. Although satellite systems are digital signals, encryption prevents them from being downloaded. The only way to legally record digital satellite is by using analog methods. Satellite is either on or off, and cannot be harmed by static or other noises that affect cable or broadcast. DirecTV and DISH Network typically use 544×480 and 480×480 resolutions.

- VHS/S-VHS History: The Video Home System (VHS) format was invented by Victor Company of Japan (JVC) in 1976. Super VHS (S-VHS) format is a 1987 JVC invention that made use of s-video (“separated-video” cable that separates luma from chroma) and incorporated a denser particle mixture on the tapes.

- S-VHS-ET and SQPB: Although some VHS units allow SQPB (S-VHS quasi-playback), it is still a VHS-quality signal being broadcast from a VHS player. VHS VCRs are unable to extract all the S-VHS information, so use an S-VHS player for S-VHS tapes. S-VHS-ET is the official name given to an old cheap trick used by many poor or cheapskate S-VHS users. In the old days, we would drill or burn holes in a VHS cassette housing, as S-VHS tapes have holes that VHS tapes do not, thus allowing players to know the difference in the equal-size tapes. In the past few years, JVC labeled it S-VHS Extended Timebase (ET mode) and has put the option onto its recorders. A VHS tape actually has more signal bandwidth available than is used by VHS players. However, it is not quite as good as a regular S-VHS tape. I highly suggest JVC, TDK EHG or any broadcast-quality VHS tape for S-VHS-ET use.

- Satellite TV: Satellite television is a special format (DVB/DSS) of MPEG-2. Satellite centers (like the DirecTV location in Colorado, or the DISH centers in Wyoming and Arizona) encode the video to MPEG-2 and uplink it to a satellite, which resends it to Earth, where it is intercepted by the digital dishes on our roofs. Because the original digital files cannot be accessed (due to encryption), footage must be captured from the analog output given off by the satellite receivers, and this is why “digital” satellite has been included in this analog source list. It is often higher quality than cable or broadcast because it is a digital signal that cannot be harmed by the static or other noise that affects cable and broadcast, and it therefore appears cleaner and crisper. Satellite signals are either on or off (excluding macroblocking and freezing due to signal interference caused by weather or aerial objects). Some DVB and FTA signals can be captured directly with acquisition cards (not really capture, more like downloading data). This is because the information is not encrypted. More information on digital satellite broadcasts can be found at CoolSTF.com and Henry-Davis.com.

Digital Source Resolutions

Digital source is already digital. It has rigid limits, specifications of acceptability, and fixed resolutions and bit-rates. Everything about a digital file can be quantified and qualified, unlike analog. Digital formats like VCD and DVD must adhere to certain specifications. This chart is completely technical and contains all of the key information about each spec. Newbies may not understand all of this information just yet, but it will be important to refer back to when capturing, encoding and authoring.

This information appears in this “understanding your source” guide mainly for comparison purposes against the analog chart above, as well as for those that desire to edit or re-convert video already in the digital domain.

| Video format | File Format | Resolutions | Video bit-rates | Audio specs |

|---|---|---|---|---|

| DVD-Video | MPEG-2 (4:2:0) | NTSC (4:3): 352x240, 352x480, 704x480, 720x480. NTSC (16:9 widescreen): 704x480, 720x480. PAL (4:3): 352x288, 352x576, 704x576, 720x576. PAL (16:9 widescreen): 704x576, 720x576 | Up to 10.08Mb/s total combined bitrate. Up to 9.8Mb/s max video bit-rate. CBR, CVBR, or VBR | (1) AC3 Dolby Digital stereo or surround. Average AC3 stereo is 192-384k. Average surround is 448k or higher. (2) LPCM uncompressed 1536k WAV/AIFF. (3) DTS, same bit-rate as AC3. (4) MPEG Layer II (MP2) stereo, 192-256k bit-rate, not officially supported in the spec |

| DVD-Video | MPEG-1, sequence headers at each GOP, 4:2:0, MP@ML | NTSC (4:3): 352x240, PAL (4:3): 352x288 | Between 1.150Mb/s and 1.856Mb/s CBR video bitrate | Same audio spec as MPEG-2 version |

| VideoCD (VCD) | MPEG-1 (4:2:0) | NTSC (4:3): 352x240, PAL (4:3): 352x288 | Exactly 1.150Mb/s CBR total video/audio bit-rate | Exactly 224k MPEG Layer II (MP2) audio |

| Super VideoCD (SVCD), Chaoji VCD, China Video Disc (CVD) | MPEG-2 (4:2:0) | NTSC: (4:3) 480x480, PAL (4:3): 480x576, CVD uses 352x480 or 352x576 resolution variant | Up to 2.520Mb/s VBR max, total combined video/audio bit-rate | Exactly 224k MPEG Layer II (MP2) audio |

| XVCD | MPEG-1 or MPEG-2, not an official "standard" | Any, standard disc and DVB/VR resolutions suggested | Any, but max 2.520Mb/s is suggested, usually VBR | Any, MP2 suggested |

| MiniDV, DV25, consumer DV | DV25 codec AVI, 4:1:1 (USA) or 4:2:0 (PAL) | NTSC (4:3): 720x480, PAL (4:3): 720x576 | 25Mb/s combined audio/video (5:1 compression) | LPCM 1536k uncompressed audio |

| MPEG-4, XVID, DIVX | FourCC AVI codecs, often used to share files online | Varies, but standard resolutions includes 640x480 and 512x384 for 4:3 content | Varies | Typically AC3, OGG, MP3 and MP2, stereo or mono |

| Other formats | Digital video has a near-infinite number of bit-rate, resolution and format combinations. Other formats include RealMedia, QuickTime and Windows Media Video (WMV). | Depends on the format | Depends on the format | Depends on the format. Many formats have dedicated audio streams. |
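Because digital specs are rigid, they are easy to check mechanically. The following Python sketch tests a set of encode settings against the MPEG-2 DVD-Video resolutions and bit-rate ceilings listed in the chart above. The function and variable names are hypothetical, and the check covers only what the chart states; it is not a full DVD compliance test.

```python
# Values copied from the DVD-Video (MPEG-2) row of the chart above.
DVD_RESOLUTIONS = {
    "NTSC": {(352, 240), (352, 480), (704, 480), (720, 480)},
    "PAL": {(352, 288), (352, 576), (704, 576), (720, 576)},
}
MAX_VIDEO_MBPS = 9.8    # maximum video bit-rate
MAX_TOTAL_MBPS = 10.08  # maximum combined video and audio bit-rate


def check_dvd(standard, width, height, video_mbps, audio_mbps):
    """Return a list of problems; an empty list means the settings fit the chart."""
    problems = []
    if (width, height) not in DVD_RESOLUTIONS.get(standard, set()):
        problems.append(f"{width}x{height} is not a DVD-Video {standard} resolution")
    if video_mbps > MAX_VIDEO_MBPS:
        problems.append(f"video bit-rate {video_mbps} exceeds {MAX_VIDEO_MBPS} Mb/s")
    if video_mbps + audio_mbps > MAX_TOTAL_MBPS:
        problems.append(f"combined bit-rate exceeds {MAX_TOTAL_MBPS} Mb/s")
    return problems


if __name__ == "__main__":
    # A typical half-resolution capture with 256k AC3 audio.
    print(check_dvd("NTSC", 352, 480, 4.0, 0.256))
    # 480x480 is an SVCD size, so it is flagged for DVD.
    print(check_dvd("NTSC", 480, 480, 4.0, 0.224))
```

The same idea extends to the VCD and SVCD rows by adding their resolutions and limits to the lookup data.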

How to Analyze Digital Files

If you’re not sure what kind of file you have, analyze it with the GSpot Codec Information Appliance (v2.7). As long as the file is not corrupted, it will reveal every aspect of the file. In the example below, GSpot shows the video file to be an MPEG-2, interlaced (I/L) top field first (TFF), 4:3 NTSC 352×480, with 256k AC3 audio, and that the needed codecs are installed on the computer, allowing players and encoders to identify the source.

Interlace vs. Progressive vs. De-Interlace

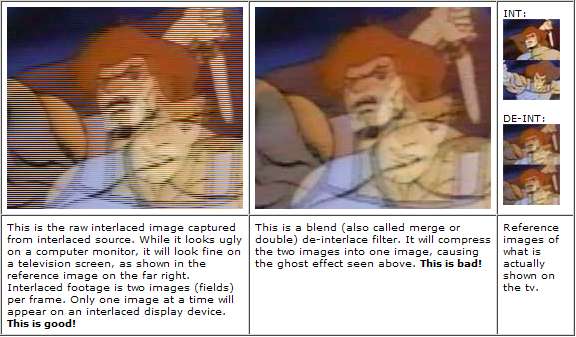

What is interlace? Interlacing is the method used to play back footage on a television set. Two images are shown in each frame, drawn in a comb pattern (see images in the table below). As one image is being drawn, the other is fading away, awaiting the redraw cycle by the electron beam. So while it is about 30 interlaced frames per second, it’s really about 60 images per second in the weaved pattern. The individual images that make up an interlaced frame are technically referred to as “fields”. This comb pattern is only visible on a progressive display device. Since most people plan to view the final video on television, it must be interlaced to retain quality. Most sources in use today are interlaced. Unless it was created on a computer or is an official release of a film, odds are that the source is interlaced. All broadcast television, cable, satellite, VHS, S-VHS, Beta, Laserdisc, 8mm, Hi8, Digital8 and most DV sources are interlaced. In the digital world, only some AVI MPEG-4 codecs and MPEG-2 support interlace. The interlace barrier for MPEG-2 is at approximately the x280 resolution. VCD and other lower-end formats do not have the luxury of interlace.
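The relationship between frames and fields can be sketched in a few lines of Python. This is only an illustration: a frame is modeled as a plain list of scanlines, the top field is assumed to hold the even-numbered rows (counting from zero), and the function names are made up.

```python
def split_fields(frame):
    """Split a frame (a list of scanlines) into its top and bottom fields."""
    top = frame[0::2]      # rows 0, 2, 4, ... (assumed to be the top field)
    bottom = frame[1::2]   # rows 1, 3, 5, ...
    return top, bottom


def weave(top, bottom):
    """Interleave two fields back into one full-height frame."""
    frame = []
    for top_line, bottom_line in zip(top, bottom):
        frame.append(top_line)
        frame.append(bottom_line)
    return frame


NTSC_FRAME_RATE = 30000 / 1001          # the "29.97" frames per second
FIELDS_PER_SECOND = 2 * NTSC_FRAME_RATE  # two fields in every frame

if __name__ == "__main__":
    # Two fields captured at different moments, A then B.
    field_a = ["A0", "A1", "A2"]
    field_b = ["B0", "B1", "B2"]
    # Woven together they alternate line by line: the "comb" pattern.
    print(weave(field_a, field_b))
    print(f"{FIELDS_PER_SECOND:.2f} fields per second")
```

When the two fields come from different moments in time, the alternating lines are what show up as comb teeth on a progressive display.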

What is progressive? Separate images shown in progression to give the feel of motion. All film source is progressive. Film is nothing more than a series of still images shown one after the other. This is progressive.

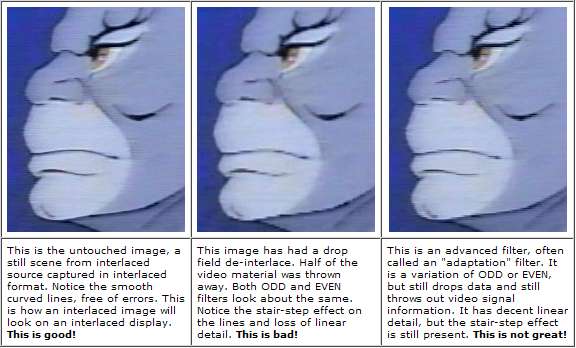

What is de-interlace? De-interlacing is the process of converting interlaced source into progressive source. It is commonly referred to as de-interlaced source, but that is a misnomer, though common lingo of the day among hobbyist videographers. De-interlacing loses data. It’s that simple. A de-interlace basically throws away parts of the video signal or blends it together with other parts of the video signal. It destroys interlaced footage. The only use gained by de-interlacing is to remove combs on a progressive display that has no access to on-the-fly de-interlacing software or hardware. My biggest pet peeve is people that mindlessly de-interlace interlaced footage. Too many users just hit the “go” button in their software, and never consider the source, the capture method or the actions of the software. Recording in the wrong format will look bad and lose quality. There are only a few situations where de-interlacing is necessary, and most DVD-making hobbyists will never find themselves in those situations, especially since the current trend is converting old VHS to DVD or recording from television directly to DVD.

There are several rules to follow:

- Always match your source when capturing. This means capture interlaced footage as interlaced. And capture progressive source as progressive.

- Always match the output device when encoding. This means you should encode for viewing on the desired device. Encode interlaced for viewing on an interlaced viewing device (TV). Encode progressive for viewing on a progressive display (computer monitor). Most people should be capturing and encoding interlaced!

There are several ways to accomplish a de-interlace:

- Drop field. This is often known as the EVEN or ODD de-interlace filters, as it drops the EVEN or ODD fields from the image.

- Merge fields. This merges two fields together. This is often called DOUBLE or BLEND in software.

- Bob. This is not really a de-interlace filter at all, but rather a method of playing all 59.94 fields per second, one after another on high-frame-rate progressive devices. However, without proper aspect being forced, it will revert to half-height images because a field is only half of the frame. The ATI All In Wonder card with ATI MMC uses the “bob” filter during capture preview and playback of live source (the aspect ratio is maintained to avoid re-size).

- Adaptive. This is a method that removes the comb-teeth effect, but still has drawbacks with stair-stepping and occasional blurring. Software players like WinDVD and PowerDVD use this filter.

- Telecine. Telecine is a fake interlace used to make 24fps film appear normal on 29.97fps interlaced display devices like television sets. It can easily be removed with an IVTC (inverse telecine) filter found in many software encoders. This will often restore the video to its original progressive state, as well as remove the extra frames inserted by the telecine process. That being said, not all movies started as progressive film source. IVTC will often still leave de-interlace artifacts, just not as many as a raw de-interlace. And once source has gone onto analog tape or broadcast, it is interlaced from then on. The telecine trick mainly works on converting existing DVD footage.
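To make the first three methods in the list above concrete, here is a simplified Python sketch of drop field, merge (blend) and bob. It models a frame as a list of rows of brightness values with an even number of rows, and it restores full height by plain line doubling. Both are assumptions made for illustration; real filters interpolate more carefully, and these function names do not come from any particular program.

```python
def drop_field(frame, keep="top"):
    """Keep one field and repeat each of its lines to restore full height."""
    start = 0 if keep == "top" else 1
    out = []
    for line in frame[start::2]:
        out.append(list(line))
        out.append(list(line))  # line doubling: half the picture data is gone
    return out


def blend_fields(frame):
    """Average each pair of adjacent lines, one line from each field."""
    out = []
    for i in range(0, len(frame) - 1, 2):
        mixed = [(a + b) // 2 for a, b in zip(frame[i], frame[i + 1])]
        out.append(mixed)
        out.append(list(mixed))
    return out


def bob(frame):
    """Turn one interlaced frame into two full-height frames, one per field."""
    return [drop_field(frame, "top"), drop_field(frame, "bottom")]


if __name__ == "__main__":
    # Top field is dark (0), bottom field is bright (100): two different moments.
    interlaced = [[0, 0], [100, 100], [0, 0], [100, 100]]
    print(drop_field(interlaced))    # only the dark moment survives
    print(blend_fields(interlaced))  # both moments smeared into gray
    print(len(bob(interlaced)))      # two output frames from one input frame
```

The sketch shows why the first two methods lose data: drop field discards one moment in time entirely, and blend mixes two moments into one picture. Bob keeps both moments but doubles the frame count.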

NOTE: The following images illustrate bad deinterlacing techniques:

A few more notes on interlace:

- Progressive cameras. Though typically interlaced, some video cameras can actually shoot progressive video. And even in those cases, the camera normally comes with interlaced as the default shooting method. It requires you to knowingly alter the camera to shoot in some progressive mode. Be aware of the frame rates too! Do not assume the progression is full NTSC or PAL fps! And other “progressive” devices have been known to blend fields to get the progression!

- A computer monitor is a progressive display device. Only encode progressive if you only plan to view it on a computer or plan to create a progressive format like VCD.

Common questions and misconceptions:

Q1: I should de-interlace because I want to watch it on my computer too?

A1: No. This is not true. All software DVD players come equipped with “bob” or “adaptive” de-interlace filters. You’re better off with playback software that uses these instead of using one of the odd/even or blend/merge/double filters.

Q2: De-interlace looks just fine on my interlaced footage?

A2: Not only is this a very subjective opinion, but you will find yourself in the minority with that outlook on video. As shown in the example above, de-interlacing has a very negative effect on video quality.

Q3: My software gives no interlace/de-interlace option, so it must be fine?

A3: While most good capturing and encoding software allows for some choices in de-interlace or interlace, some do not. I suggest you carefully learn which method your software uses, or drop it for better software. Software like WinDVR and PowerVCR II are notorious for not giving users an option and capturing with merged fields. The ATI MMC is known to come set up to drop fields, but can easily be changed to interlace in the software options.

Q4: My software only gives an option to “de-interlace” so what is it doing?

A4: Almost all software that merely gives “de-interlace” as the option will drop the even field, with few exceptions to the rule.

Q5: Want to learn more about advanced interlace theory? (Not an easy read for novices.)

A5: Visit 100fps.com

B&W vs Color

When capturing black and white source, it is highly suggested that you either purchase a color-removal device like the Sima SED-CM, or use a proc amp. Or if available in the capture software (or at the encoding stage), remember to de-saturate the color 100 percent. Color information is still present in B&W analog material. For the cleanest, most true conversion, remove the color. Modern VHS tapes and analog broadcasts tend to add a hue to the B&W footage, often giving it a nasty green, red or yellow tint. This is because analog color tv is actually just a hack/variation of the original black and white tv system. NTSC television today is not really true color, but color squeezed into a black and white signal. PAL tv can have the same problems, though for different reasons. Old film naturally turns brown or yellow with age, and that discoloration can still be seen on sloppy conversions.

Aspect Ratio

Aspect ratio is the relationship of width to height (W:H = AR). All fullscreen video for tv should play back at a 4:3 ratio (widescreen content is usually 16:9 aspect). This means the image is 4 units wide by 3 units high, measured with a ruler. For example, a tv set measuring 20 inches diagonally would show an image 16 inches wide by 12 inches high. It will fill the tv screen with video. 16:12 is a ratio of 4:3. In this case, each unit was 4 inches. This may also be referred to as DAR, or Display Aspect Ratio. Although a resolution like 480×480 may seem to imply a square image, the pixels are rectangular and not square. This is where the confusion often lies. Thus 480×480 is still a 4:3 image, when viewed on screen. Same for other resolutions. Although 720×480 may look long and flat, or 352×480 tall and skinny, both use pixel shapes that display properly at 4:3, assuming the player respects the 4:3 information.
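The pixel shape needed for a given resolution to fill a 4:3 screen is simple arithmetic, sketched below in Python. This is the plain whole-frame calculation with hypothetical function names; broadcast standards define slightly different pixel shapes, so take the results as an illustration of the concept, not as spec values.

```python
from fractions import Fraction


def pixel_aspect_ratio(width, height, dar=Fraction(4, 3)):
    """Pixel shape (width:height) that makes width x height fill the display aspect."""
    return dar / Fraction(width, height)


def square_pixel_width(height, dar=Fraction(4, 3)):
    """Width the picture would have with square pixels at the same height."""
    return height * dar


if __name__ == "__main__":
    for w, h in [(480, 480), (720, 480), (352, 480)]:
        print(f"{w}x{h}: each pixel is {pixel_aspect_ratio(w, h)} as wide as it is tall")
    print(square_pixel_width(480))  # 640
```

For 480×480 the pixels come out wider than tall (4/3), for 720×480 slightly narrower than tall (8/9), and for 352×480 much wider (20/11). All three stretch or squeeze to the same 4:3 picture, the equivalent of 640×480 in square pixels.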

Colorspace Compression

This is actually quite a bit more complex of a topic, but for the purpose of introducing new concepts, this will suffice:

Digital video image data is stored in YUV format. Each of these components contains data (the Y, the U and the V), though they are not required to contain the same amount of data. The “Y” is called luma, and stores the brightness, which is the main picture information. The “U” and “V” are chroma and provide the color information. You may also see YUV referred to as YCbCr; YIQ is the closely related scheme used by analog NTSC.

The compression rate of YUV is represented as Y:U:V in numbers. Some common ones:

4:4:4 = Uncompressed. Equal number of samples of Y, U and V.

4:2:2 = U and V are horizontally half of Y. Used for VHS/cable/broadcast, etc.

4:2:0 = U and V are both vertically and horizontally half of Y. Used for DVD MPEG and DVB.

4:1:1 = U and V are horizontally one-quarter of Y, with full vertical resolution. Used in NTSC consumer DV.

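The three numbers describe how a block of pixels four wide and two rows high is sampled. The first number is the luma samples per row, the second is the chroma samples in the first row, and the third is the chroma samples in the second row. A ZERO in the third position does not mean color is thrown away entirely; it means the second row reuses the chroma from the first row. Human eyes are far more sensitive to luma (brightness) than to chroma (color), which is why chroma can be reduced with little visible disadvantage. The 4:1:1, however, does tend to compress color information in the visible domain, and is why it’s often disliked by more advanced videographers and professional video editors. It’s a consumer format, and definitely not a perfect one.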
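A short Python sketch can show how much color data each scheme keeps. It assumes the usual definitions (4:2:2 halves chroma horizontally, 4:2:0 halves it both ways, 4:1:1 quarters it horizontally and keeps full vertical resolution); the names are made up for illustration.

```python
# (horizontal divisor, vertical divisor) applied to the U and V planes
SUBSAMPLING = {
    "4:4:4": (1, 1),
    "4:2:2": (2, 1),
    "4:2:0": (2, 2),
    "4:1:1": (4, 1),
}


def chroma_plane_size(width, height, scheme):
    """Width and height of each chroma plane (U or V) for a given luma size."""
    h_div, v_div = SUBSAMPLING[scheme]
    return width // h_div, height // v_div


def samples_per_frame(width, height, scheme):
    """Total stored samples: one Y plane plus one U and one V plane."""
    chroma_w, chroma_h = chroma_plane_size(width, height, scheme)
    return width * height + 2 * chroma_w * chroma_h


if __name__ == "__main__":
    for scheme in SUBSAMPLING:
        size = chroma_plane_size(720, 480, scheme)
        total = samples_per_frame(720, 480, scheme)
        print(f"{scheme}: chroma planes {size[0]}x{size[1]}, {total} samples per frame")
```

For a 720×480 frame, 4:2:0 and 4:1:1 store the same total amount of data, but 4:1:1 leaves only 180 color samples across each line, which is where its visible color smearing comes from.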

This is being added to this guide solely to demonstrate the concept, as well as to suggest that high-quality editors seek formats other than consumer DV25, either for shooting, or as the conversion method. Many of the newer consumer cameras come with 3CCDs (like Panasonic) or additional filters (like Canon DIGIC technology) to suppress color compression loss, so most consumers should be happy. For an analog-to-digital conversion method (using devices like the Canopus ADVC-100), DV is never suggested, as it is far too lossy.

Overscan: Masking and Cropping

What is overscan? A tv set shows only about the inner 93 percent of the available image. The rest is hidden behind the box surrounding the tube. This is called overscanning. Broadcasters and others in video know full well that this happens, so rest assured that you are not missing much. In fact, the overscan area often contains little more than black bars or video errors, and should be seen as a courtesy more so than a hindrance. Recorded formats (VHS, S-VHS, etc) suffer the most, in terms of overscan noise. Broadcast formats often have black bars in the overscan. Most satellite streams have noise in the upper overscan, visual residue from non-video parts of the data stream.

You never see this portion of the image on a tv set, but computers have no such mechanism. So, sadly, many people feel they must “crop” or “mask” out the noise. In most cases, it is simply a lack of knowledge from amateurs online, thinking they have a “bad quality” signal. So they seek to mask it, which is fine. Or more often, crop it, which is undesirable.

In this image, the BLUE LINE denotes the overscan boundary. Only the data inside of the rectangle will be visible on a tv set. The data outside is not seen on the tv, though it will likely be visible on a computer screen. Note the timebase flaws at the top of the signal (skewed video), the missing image data on the left side, and the crawling errors at the bottom of the screen. Again, none of that will be visible on a tv set, though it will be encoded into the video file. Also know that every tv will differ in the exact overscan position. Some may be slightly larger, slightly smaller, or even positioned more to one side.
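As a rough guide to where that boundary falls, the Python sketch below computes a centered visible box for a given frame size. It assumes the 93 percent figure applies to both width and height and that the visible area is perfectly centered; as noted above, real tv sets vary, so the result is only an estimate. The function name is hypothetical.

```python
def overscan_box(width, height, visible=0.93):
    """Return (left, top, right, bottom) of the centered region a tv would show."""
    visible_w = round(width * visible)
    visible_h = round(height * visible)
    left = (width - visible_w) // 2
    top = (height - visible_h) // 2
    return left, top, left + visible_w, top + visible_h


if __name__ == "__main__":
    left, top, right, bottom = overscan_box(720, 480)
    print(f"Visible area: x {left} to {right}, y {top} to {bottom}")
    print(f"Hidden border: about {left} pixels per side, {top} lines top and bottom")
```

For a 720×480 capture this gives a hidden border of roughly 25 pixels on each side and 17 lines at the top and bottom, which is where tape noise and head-switching errors usually sit.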

What is masking? Masking is a photo/design term, and means to “cover something up”. In video, this usually means to put black over a portion of the image, often to hide noise. ATI MMC has a useful feature (as discussed in the ATI AIW capture guides) that masks while capturing. Encoders like Procoder and TMPGENC have mask options in the crop filters. And NLE software like Adobe Premiere has masking via the “clip” tools. Though masking serves no visual benefit when watching on tv, it can cover up undesirable noise when viewed on a non-overscan device (like a computer monitor), without a harmful crop needing to be performed. It may also help with bit-rate allocation, since the bit-rate-gobbling noise is no longer present. MASK ONLY WHEN NECESSARY!

What is cropping? Cropping is the often-harmful practice of chopping off part of the picture. For example, if you started out with a 400×300 video, and decided to crop 10 pixels off each side, and left it at 380×280, then it would still look fine. But that is typically not what happens. More often, the person crops 10 off each side, then allows an encoder to stretch the remaining image back to the 400×300 size, which blurs and distorts the image in varying ways. Many people like to download video clips and encode them to DVD for tv viewing, only to discover part of the signal missing. This is due to cropping done at the time of encode, which means black borders must be added back to the video to restore the overscan (this is yet another reason why downloaded Internet files are low quality and terrible to work with). TRY TO NEVER CROP VIDEO FILES!
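The difference between masking and cropping can be sketched in Python. A frame is modeled here as a list of rows of pixel values, and the function names are made up for illustration; real software works on actual video frames, but the arithmetic is the same.

```python
def mask(frame, border, black=0):
    """Paint `border` pixels black on every edge; the frame size is unchanged."""
    height, width = len(frame), len(frame[0])
    return [
        [
            black
            if (y < border or y >= height - border
                or x < border or x >= width - border)
            else pixel
            for x, pixel in enumerate(row)
        ]
        for y, row in enumerate(frame)
    ]


def crop(frame, border):
    """Cut `border` pixels off every edge; the frame gets smaller."""
    return [row[border:len(row) - border]
            for row in frame[border:len(frame) - border]]


def stretch_factor(width, height, border):
    """Enlargement applied when a cropped image is resized back to full size."""
    return width / (width - 2 * border), height / (height - 2 * border)


if __name__ == "__main__":
    frame = [[9] * 6 for _ in range(4)]   # a tiny 6x4 test frame
    print(len(mask(frame, 1)[0]), len(mask(frame, 1)))  # still 6 wide, 4 high
    print(len(crop(frame, 1)[0]), len(crop(frame, 1)))  # now 4 wide, 2 high
    # The 400x300 example from the text, cropped by 10 and stretched back:
    print(stretch_factor(400, 300, 10))
```

In the 400×300 example, stretching the 380×280 crop back to full size enlarges it by about 5.3 percent horizontally but about 7.1 percent vertically. The two factors differ, so the picture is both softened by the resize and slightly distorted in shape. Masking avoids both problems because no pixel moves.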

Playback Considerations

Much like source, there are also only two kinds of playback devices, analog and digital. Analog playback devices are normally tv sets and projection screens. Digital devices include computer monitors and HDTV sets. If proper playback considerations are not made, analog source played back on a digital device will look bad. The opposite holds true as well: digital source played back on an analog device can look bad. Digital source and playback devices are typically progressive, while analog is typically interlaced.

Playback on analog devices (television set): A television set is not the same as a computer monitor. A tv has exactly 525 scanlines (NTSC) on the complete tube, with a resolution of roughly 300×480 to 500×480. The amount of horizontal resolution depends on both the age and size of the tv set, with most of the 21-36 inch sizes carrying about 400 lines, while smaller sets have less resolution and larger ones have more. Although a tv set can play back progressive source, it will not look as good as interlaced source. A tv was made to play back interlaced analog source.

Playback on digital devices (computer monitor): A computer monitor has nothing in common with a television set. Its refresh rate, resolutions and color-depth can be altered at will. A computer monitor is progressive rather than interlaced. While a monitor can play back interlaced footage (often using DVD player software like PowerDVD or WinDVD), it will not look as good as playback on an analog device because varying de-interlace playback filters must be used. The computer is a progressive interface and does best with progressive playback.

What Does All This Mean?

Much of this page has been written in response to the bad habits so many novices seem to have, be it overkill on resolution and bit-rates, mishandling of interlace, or needlessly cropping and chopping video. Too many users insist on using the highest bit-rates and resolutions on their video project, insisting that is what it takes to make “top quality” or “professional-quality” work. It simply is not true. The information above shows the specs of video used in the video industry and on the devices in our own homes.

When working with video, carefully plan out the entire project, and ignore no details. Before tearing into that new software and clicking (or not clicking) on all the options, learn what they mean, and when to use or not use them. That’s really all there is to it.

This is by far the most time-consuming and in-depth page on the site. Donations have also compelled us to regularly update this page with the best possible information. Your dollars do go towards the continual advancement of pages such as these.